I’m not sure whether I’m more impressed by the technical proficiency of the software developers behind ChatGPT or more alarmed by the dystopian future that I see such software leading to.

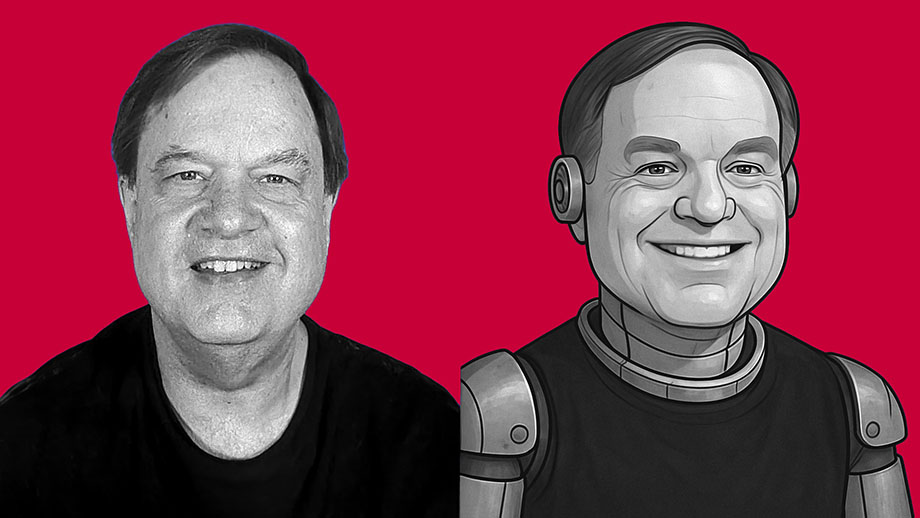

What we call “artificial intelligence” is nothing but software. It isn’t intelligent. It has no consciousness. It has no actual awareness or understanding of what it produces. It’s just lines of computer code written to produce material that mimics human behavior. If you think of AI as some form of semi-consciousness, you’re buying into science fiction. This is nothing but software written by clever people — and it’s nowhere near as “smart” as you’ve been led to believe.

But AI software — such as ChatGPT and its competitors — is getting better and better at spitting out content that mimics what a human might have created with real thought. And I think this is dangerous.

As an experiment, I asked ChatGPT to create an essay in my own writing style. I didn’t give it a subject. These are the only instructions I gave the software: “Write an 800-word essay in the same style used by the writer of davidmcelroy.org.”

The results shocked me.

Do you believe you’re free? Slavery by any other name is still slavery

Fear of Big Brother: What good are rights if you’re afraid to use them?

Why do we stay in prison when there’s no lock holding us there?

Dead man’s watch always there to remind me of my own mortality

Barack Obama’s effort to imitate FDR’s ’36 campaign full of danger

Goodbye, Amelia (2000-2013)

If you live in Hawaii and want to see my film on TV, public access is coming your way with it soon

Promises from childhood don’t always serve our needs today