I’m not sure whether I’m more impressed by the technical proficiency of the software developers behind ChatGPT or more alarmed by the dystopian future that I see such software leading to.

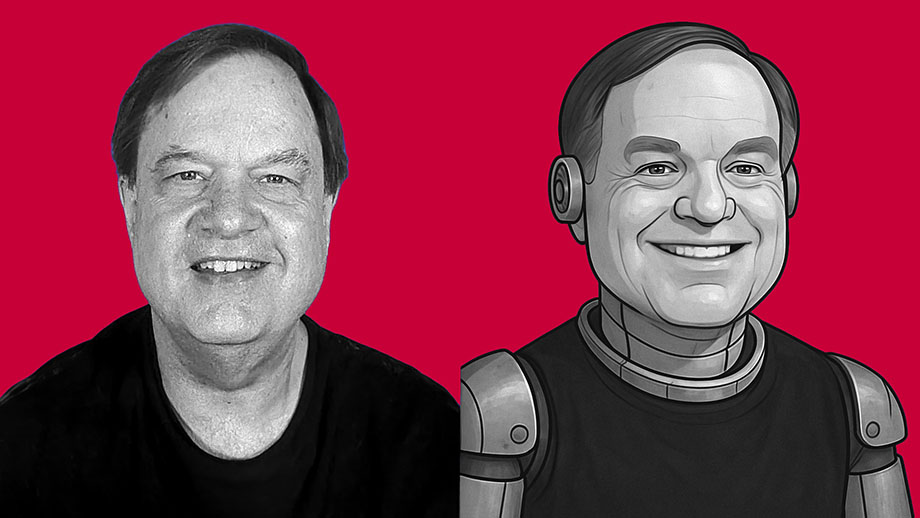

What we call “artificial intelligence” is nothing but software. It isn’t intelligent. It has no consciousness. It has no actual awareness or understanding of what it produces. It’s just lines of computer code written to produce material that mimics human behavior. If you think of AI as some form of semi-consciousness, you’re buying into science fiction. This is nothing but software written by clever people — and it’s nowhere near as “smart” as you’ve been led to believe.

But AI software — such as ChatGPT and its competitors — is getting better and better at spitting out content that mimics what a human might have created with real thought. And I think this is dangerous.

As an experiment, I asked ChatGPT to create an essay in my own writing style. I didn’t give it a subject. These are the only instructions I gave the software: “Write an 800-word essay in the same style used by the writer of davidmcelroy.org.”

The results shocked me.
