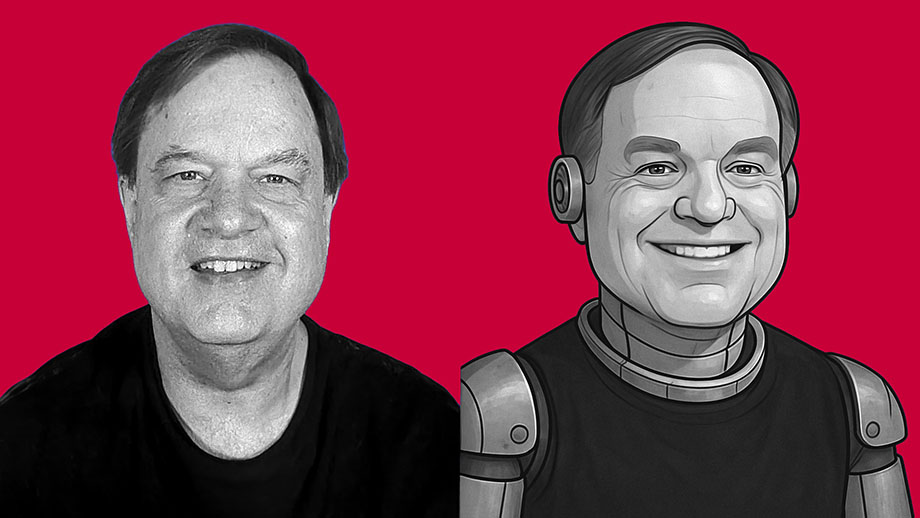

I’m not sure whether I’m more impressed by the technical proficiency of the software developers behind ChatGPT — or if I’m more alarmed by the dystopian future that I see such software leading to.

What we call “artificial intelligence” is nothing but software. It isn’t intelligent. It has no consciousness. It has no actual awareness or understanding of what it produces. It’s just lines of computer code written to produce material that mimics human behavior. If you think of AI as some form of semi-consciousness, you’re buying into science fiction. This is nothing but software written by clever people — and it’s nowhere near as “smart” as you’ve been led to believe.

But AI software — such as ChatGPT and its competitors — is getting better and better at spitting out content that mimics what a human might have created with real thought. And I think this is dangerous.

As an experiment, I asked ChatGPT to write an essay in my own writing style. I didn’t give it a subject. These were the only instructions I gave the software: “Write an 800-word essay in the same style used by the writer of davidmcelroy.org.”

The results shocked me.
