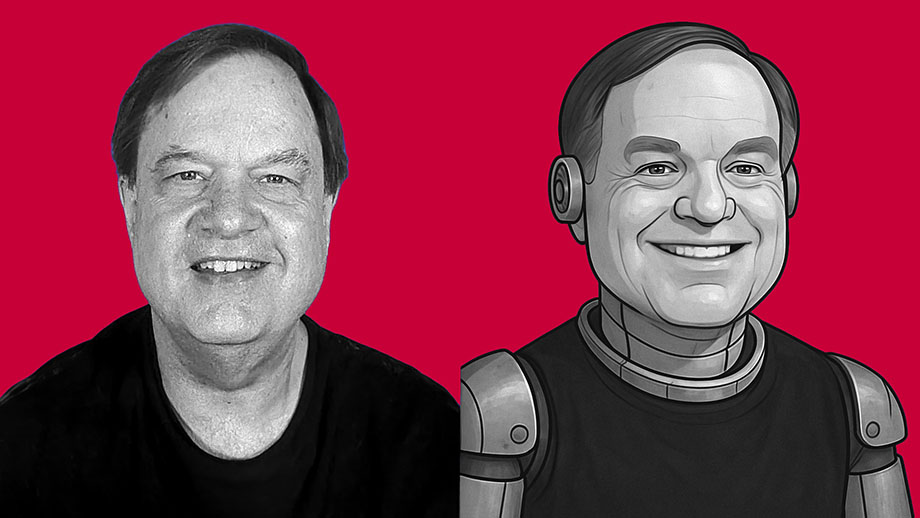

I’m not sure whether I’m more impressed by the technical proficiency of the software developers behind ChatGPT or more alarmed by the dystopian future that I see such software leading to.

What we call “artificial intelligence” is nothing but software. It isn’t intelligent. It has no consciousness. It has no actual awareness or understanding of what it produces. It’s just lines of computer code written to produce material that mimics human behavior. If you think of AI as some form of semi-consciousness, you’re buying into science fiction. This is nothing but software written by clever people — and it’s nowhere near as “smart” as you’ve been led to believe.

But AI software — such as ChatGPT and its competitors — is getting better and better at spitting out content that mimics what a human might have created with real thought. And I think this is dangerous.

As an experiment, I asked ChatGPT to create an essay in my own writing style. I didn’t give it a subject. These are the only instructions I gave the software: “Write an 800-word essay in the same style used by the writer of davidmcelroy.org.”

The results shocked me.
