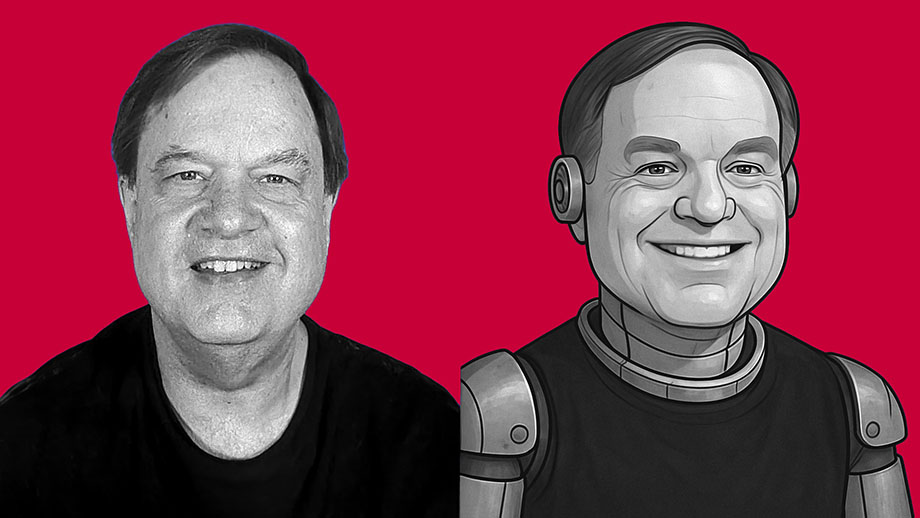

I’m not sure whether I’m more impressed by the technical proficiency of the software developers behind ChatGPT or more alarmed by the dystopian future that I see such software leading to.

What we call “artificial intelligence” is nothing but software. It isn’t intelligent. It has no consciousness. It has no actual awareness or understanding of what it produces. It’s just lines of computer code written to produce material that mimics human behavior. If you think of AI as some form of semi-consciousness, you’re buying into science fiction. This is nothing but software written by clever people — and it’s nowhere near as “smart” as you’ve been led to believe.

But AI software — such as ChatGPT and its competitors — is getting better and better at spitting out content that mimics what a human might have created with real thought. And I think this is dangerous.

As an experiment, I asked ChatGPT to create an essay in my own writing style. I didn’t give it a subject. This is the only instruction I gave the software: “Write an 800-word essay in the same style used by the writer of davidmcelroy.org.”

The results shocked me.
