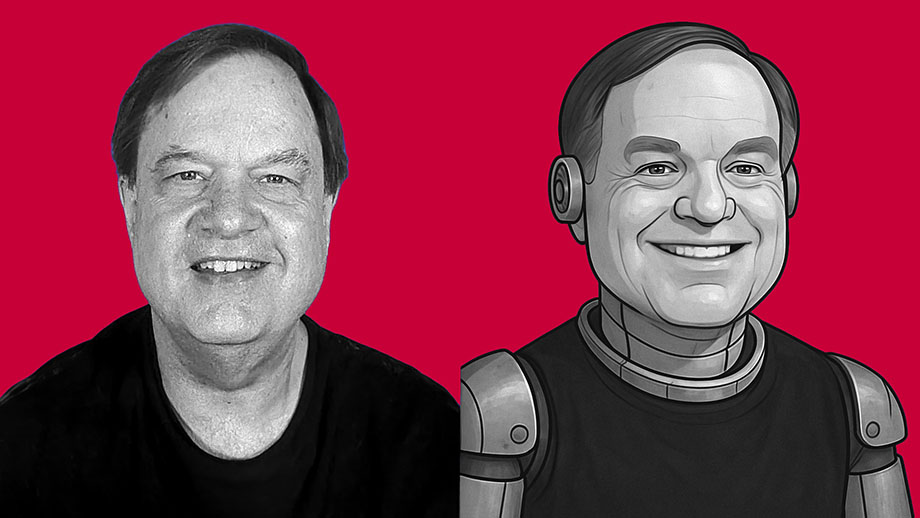

I’m not sure whether I’m more impressed by the technical proficiency of the software developers behind ChatGPT — or if I’m more alarmed by the dystopian future that I see such software leading to.

What we call “artificial intelligence” is nothing but software. It isn’t intelligent. It has no consciousness. It has no actual awareness or understanding of what it produces. It’s just lines of computer code written to produce material that mimics human behavior. If you think of AI as some form of semi-consciousness, you’re buying into science fiction. This is nothing but software written by clever people — and it’s nowhere near as “smart” as you’ve been led to believe.

But AI software — such as ChatGPT and its competitors — is getting better and better at spitting out content that mimics what a human might have created with real thought. And I think this is dangerous.

As an experiment, I asked ChatGPT to create an essay in my own writing style. I didn’t give it a subject. These were the only instructions I gave the software: “Write an 800-word essay in the same style used by the writer of davidmcelroy.org.”

The results shocked me.
