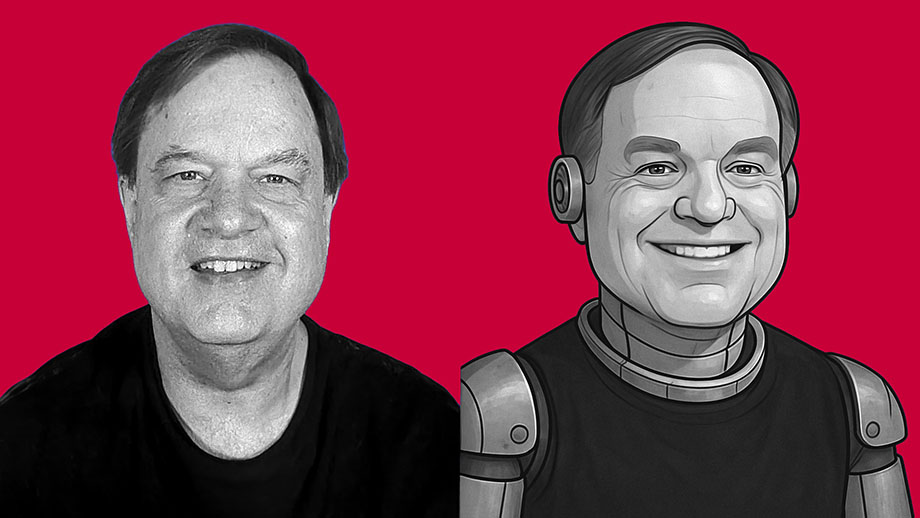

I’m not sure whether I’m more impressed by the technical proficiency of the software developers behind ChatGPT — or if I’m more alarmed by the dystopian future that I see such software leading to.

What we call “artificial intelligence” is nothing but software. It isn’t intelligent. It has no consciousness. It has no actual awareness or understanding of what it produces. It’s just lines of computer code written to produce material that mimics human behavior. If you think of AI as some form of semi-consciousness, you’re buying into science fiction. This is nothing but software written by clever people — and it’s nowhere near as “smart” as you’ve been led to believe.

But AI software — such as ChatGPT and its competitors — is getting better and better at spitting out content that mimics what a human might have created with real thought. And I think this is dangerous.

As an experiment, I asked ChatGPT to create an essay in my own writing style. I didn’t give it a subject. These are the only instructions I gave the software: “Write an 800-word essay in the same style used by the writer of davidmcelroy.org.”

The results shocked me.
